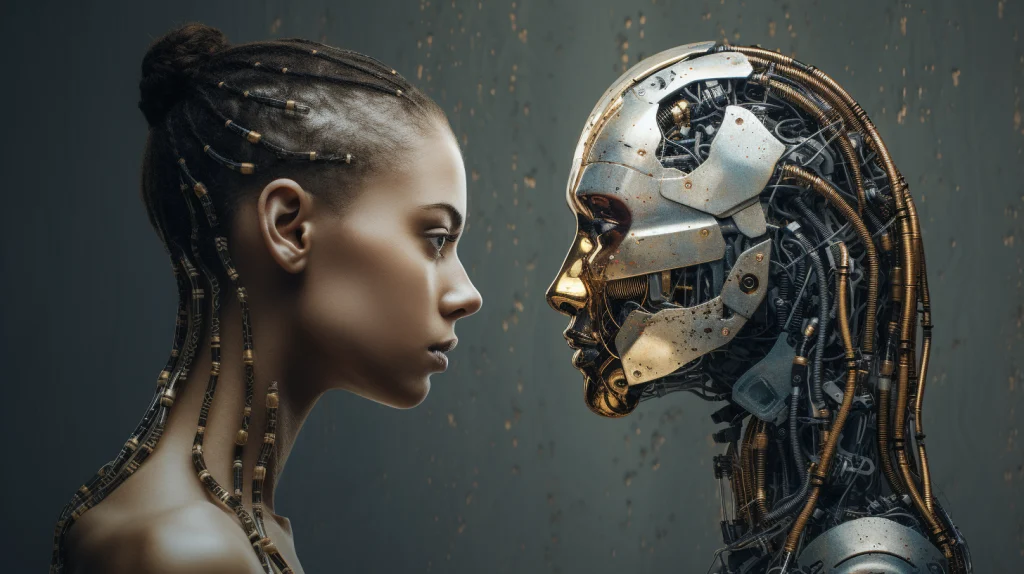

Have you ever wondered how artificial intelligence actually works? The way people talk about AI often makes it sound more human than it truly is. These habits of language can influence how we understand the technology, sometimes leading to confusion about its actual capabilities. While AI systems can perform complex tasks and generate human-like responses, they do not think, feel, or understand the way humans do.

- The Language We Use Shapes Perception

- The Role of Anthropomorphism in AI Discussions

- Insights from Academic Research

- How News Writers Describe AI

- Why Context Matters More Than Word Counts

- The Difference Between Human Thinking and AI Processing

- The Risks of Misunderstanding AI

- The Responsibility of Writers and Communicators

- Practical Approaches to Clear AI Communication

- The Future of AI Language

- Conclusion

The growing presence of AI in everyday life has made it more important than ever to communicate clearly about what these systems can and cannot do. From chatbots to recommendation engines, AI tools are designed to process large amounts of data and identify patterns. However, describing these processes using human-centered language can blur the distinction between computation and genuine thought.

The Language We Use Shapes Perception

People frequently describe artificial intelligence with words such as "smart" and "intelligent," or even say that it "knows" something. These expressions may seem harmless, but they can create a misleading impression. When we say that AI knows or understands, we borrow terms deeply rooted in human cognition and apply them to machines that operate very differently.

This tendency is not surprising. Human language has evolved to describe human experiences, thoughts, and emotions. When new technologies emerge, we often rely on familiar vocabulary to explain them. As a result, AI systems are sometimes described in ways that make them appear more capable or human-like than they actually are.

For example, when an AI system generates a response to a question, it may seem to understand the query. In reality, it is identifying patterns in data and producing a response based on probabilities. There is no awareness, intention, or comprehension behind the output. Yet the language we use can make it feel otherwise.

The Role of Anthropomorphism in AI Discussions

A key concept in understanding this issue is anthropomorphism: the practice of attributing human characteristics to non-human entities. It is a natural human tendency, observed across cultures and throughout history. People often attribute emotions to animals, personalities to objects, and intentions to natural phenomena.

When applied to artificial intelligence, anthropomorphism can be particularly influential. AI systems are designed to interact with humans, often using natural language. This makes it easier for people to perceive them as having human-like qualities.

However, this perception can be misleading. AI does not possess consciousness, emotions, or self-awareness. It does not think or reason in the way humans do. Instead, it processes data using algorithms and produces outputs based on patterns it has learned during training.

Insights from Academic Research

Researchers have begun to explore how language shapes public understanding of AI. One notable study by researchers at Iowa State University examined how often human-like language is used in news reporting about AI.

Jo Mackiewicz, a professor of English, explained that people naturally use mental verbs such as think, know, understand, and remember in everyday conversation. These words help us describe human cognition, so it is not surprising that they are also used when discussing AI.

She noted that this language can make AI systems more relatable. When people hear that a machine understands something, they can more easily connect with it. However, this also introduces a risk. Using human-centered language may blur the line between what humans can do and what machines can do.

Another researcher, Aune, highlighted that certain phrases can leave a lasting impression on readers. These expressions can shape public perception in ways that are not always accurate or helpful.

How News Writers Describe AI

To better understand how AI is portrayed in the media, the researchers analyzed the News on the Web (NOW) Corpus. This extensive dataset contains more than 20 billion words from English-language news articles published in 20 different countries.

The researchers focused on how often mental verbs were used alongside terms such as "artificial intelligence" and "ChatGPT." Words like "learns," "knows," and "means" were examined to determine whether journalists frequently describe AI in human-like terms.
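To make this kind of counting concrete, here is a minimal Python sketch of collocation counting. It assumes the corpus is already available as a list of sentences and looks only for a mental verb immediately following an AI term. The function name, term lists, and sample sentences are hypothetical illustrations, not the study's actual search procedure or data.

```python
import re
from collections import Counter

# Hypothetical term lists for illustration; not the study's actual search terms.
AI_TERMS = ["artificial intelligence", "chatgpt", "ai"]
MENTAL_VERBS = ["learns", "knows", "means", "thinks", "understands"]

def count_mental_verb_pairs(sentences):
    """Count (AI term, mental verb) pairs where the verb directly follows the term."""
    terms = "|".join(re.escape(t) for t in AI_TERMS)
    verbs = "|".join(re.escape(v) for v in MENTAL_VERBS)
    # \b keeps "ai" from matching inside longer words such as "said"
    pattern = re.compile(rf"\b({terms})\s+({verbs})\b", re.IGNORECASE)
    counts = Counter()
    for sentence in sentences:
        for term, verb in pattern.findall(sentence):
            counts[(term.lower(), verb.lower())] += 1
    return counts

# Invented example sentences, not drawn from the NOW Corpus.
sample = [
    "Experts say AI knows nothing about the world.",
    "ChatGPT knows how to format a table.",
    "Artificial intelligence learns from labeled data.",
    "What the result means for AI is unclear.",
]
print(count_mental_verb_pairs(sample))
```

A count like this records only that the words co-occur. It cannot tell whether "learns" refers to a technical process or implies human-like understanding.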

The findings were somewhat unexpected. News writers tend to avoid excessive anthropomorphic language when discussing AI. While such language is common in everyday conversation, it appears less frequently in professional journalism.

This suggests that journalists are aware of the potential for misunderstanding and make an effort to use more precise language. Anthropomorphic expressions are not entirely absent, however. When they do appear, they fall along a spectrum: in some cases they simply describe functional processes, while in others they imply human-like qualities.

Why Context Matters More Than Word Counts

One of the most important conclusions of the study is that context plays a critical role in shaping meaning. Simply counting how often certain words appear is not enough to understand how language influences perception.

For instance, the word learn can be used in different ways. In a technical context, it may refer to how an algorithm adjusts its parameters based on data. In a more general context, it might suggest understanding or comprehension. Without considering the surrounding context, it is difficult to determine how the word is being interpreted.
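In the technical sense, "learning" can be as mechanical as the following Python sketch. It assumes the simplest possible model, y ≈ w·x, and adjusts the single parameter w by gradient descent on the mean squared error. The function `learn_weight` and the sample data are hypothetical. Real AI systems adjust vastly more parameters, but many are trained in a broadly similar way: by repeatedly nudging numbers to reduce error on data.

```python
def learn_weight(xs, ys, learning_rate=0.01, steps=1000):
    """Fit y ≈ w * x by repeatedly nudging w to reduce mean squared error."""
    w = 0.0  # start with an arbitrary guess
    n = len(xs)
    for _ in range(steps):
        # Gradient of the mean squared error with respect to w
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n
        # Move w a small step in the direction that lowers the error
        w -= learning_rate * grad
    return w

xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]  # example data generated by y = 2x
w = learn_weight(xs, ys)
print(round(w, 3))
```

Nothing in this loop resembles comprehension: the "learned" value of about 2.0 is simply the number that minimizes the error on these four examples.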

This highlights the importance of careful communication. Writers need to consider not only the words they use but also how their audience might understand them.

The Difference Between Human Thinking and AI Processing

To fully understand why language matters, it is important to examine the fundamental differences between human thinking and AI processing.

Human cognition involves consciousness, emotions, experiences, and self-awareness. People think, reason, and make decisions based on a combination of logic, intuition, and personal context. Human understanding is deeply connected to lived experience and the ability to interpret meaning.

In contrast, AI operates through mathematical models and data processing. It does not have experiences or awareness. It cannot feel emotions or form intentions. Instead, it analyzes patterns in data and generates outputs based on statistical relationships.

For example, when an AI system responds to a question, it is not drawing on personal knowledge or understanding. It produces a statistically likely response based on patterns learned from large datasets. This process can yield results that appear intelligent, but it is fundamentally different from human thinking.
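A toy example can show how pattern-based output works without any understanding. The Python sketch below is a bigram model: it counts which word follows which in a tiny sample text, then greedily appends the most frequent next word. This is an illustrative simplification, far simpler than any production system, which typically uses neural networks over subword tokens and often samples from the probabilities rather than always taking the top choice. All function names and the sample text are hypothetical.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count how often each word is followed by each other word."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def next_word_probabilities(follows, word):
    """Turn raw counts into relative frequencies (a simple probability estimate)."""
    counts = follows.get(word, Counter())
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()} if total else {}

def generate(follows, start, length=5):
    """Greedily append the most frequent next word; no meaning is involved."""
    output = [start]
    for _ in range(length):
        probs = next_word_probabilities(follows, output[-1])
        if not probs:
            break  # no recorded continuation for this word
        output.append(max(probs, key=probs.get))
    return " ".join(output)

corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
model = train_bigrams(corpus)
print(next_word_probabilities(model, "the"))
print(generate(model, "the", length=4))
```

With this sample text, the model continues "the" as "the cat sat on the" purely from word-pair counts (ties go to the pair seen first), not because it knows anything about cats or mats.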

The Risks of Misunderstanding AI

Misleading language can have real consequences. When people believe that AI systems think or understand like humans, they may overestimate their capabilities. This can lead to unrealistic expectations and potential misuse of technology.

For instance, users might assume that an AI system can provide accurate and reliable information in all situations. In reality, AI systems can make errors, reflect biases in their training data, and generate incorrect or misleading outputs.

Overestimating AI can also impact decision-making in critical areas such as healthcare, education, and business. If people rely too heavily on AI without understanding its limitations, it can lead to poor outcomes.

The Responsibility of Writers and Communicators

Given the influence of language, writers and communicators have an important role to play in shaping public understanding of AI. Clear and accurate descriptions can help people develop a more realistic view of technology.

The research from Iowa State University emphasizes the need for thoughtful communication. Professionals who write about AI should consider how their word choices might affect readers’ perceptions.

This does not mean that all human-like language should be avoided. In some cases, it can be useful for simplifying complex concepts. However, it should be used carefully and with an awareness of its potential impact.

Practical Approaches to Clear AI Communication

There are several strategies that writers can use to improve clarity when discussing AI.

First, they can focus on describing processes rather than assigning human traits. Instead of saying that an AI system understands a question, it may be more accurate to say that it analyzes the input and generates a response based on patterns.

Second, writers can provide context and explanations. When technical terms are used, they should be clearly defined to avoid confusion.

Third, it is important to acknowledge limitations. Highlighting what AI cannot do is just as important as explaining what it can do. This helps create a balanced and realistic understanding.

The Future of AI Language

As artificial intelligence continues to evolve, the way people talk about it will remain important. New developments in AI may lead to more advanced capabilities, but the fundamental differences between machines and human cognition are likely to persist.

Researchers such as Jo Mackiewicz and Aune suggest that ongoing awareness is essential. Writers, educators, and professionals need to remain mindful of how language influences perception.

The study "Anthropomorphizing Artificial Intelligence: A Corpus Study of Mental Verbs Used with AI and ChatGPT," published in Technical Communication Quarterly, provides valuable insights into this issue. It highlights the importance of careful wording and encourages continued research into how language shapes understanding.

Conclusion

Artificial intelligence is a powerful and rapidly advancing technology, but it does not think like humans. It processes data, identifies patterns, and generates outputs based on statistical relationships.

The language used to describe AI plays a crucial role in shaping how people perceive it. Human-like expressions can make AI seem more capable than it really is, leading to misunderstandings and unrealistic expectations.